Artificial intelligence training and real-time data analytics require massive, continuous streams of data. As graphics processing units (GPUs) and specialized AI accelerators achieve unprecedented computational speeds, the underlying storage infrastructure frequently becomes the primary system bottleneck. Traditional storage architectures fail to supply data fast enough to keep compute resources fully saturated, leading to idle processing time and extended training cycles.

Addressing this data starvation requires a fundamental shift in how organizations design their storage arrays. The integration of Non-Volatile Memory Express (NVMe) technology into network-attached storage environments provides the necessary throughput and low latency. By adopting NVMe-powered network storage solutions, engineering teams can reduce I/O bottlenecks and keep high-performance compute clusters running close to full utilization.

This post examines the architectural advantages of NVMe-based storage systems, detailing how they support the rigorous demands of machine learning and real-time analytics workloads.

The I/O Bottleneck in AI Training Workloads

Training deep learning models involves processing terabytes or even petabytes of unstructured data, such as high-resolution images, audio files, and large text corpora. The process requires highly randomized read patterns. When a system relies on legacy storage protocols like SATA or SAS, the storage queue depth is severely limited. SATA (via AHCI) exposes a single queue of 32 commands, and SAS a single queue of roughly 256 commands, which restricts the system's ability to process parallel requests.
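To make the queue-depth limit concrete, here is a minimal sketch based on Little's Law (throughput = requests in flight / latency). The 100-microsecond service time is an assumed, illustrative figure rather than a measurement, and the function name `max_iops` is hypothetical.

```python
def max_iops(queue_depth: int, latency_s: float) -> float:
    """Upper bound on IOPS from Little's Law: in-flight requests / latency."""
    if latency_s <= 0:
        raise ValueError("latency_s must be positive")
    return queue_depth / latency_s


# Assumed per-request service time (illustrative only).
LATENCY_S = 100e-6

# Queue depths as commonly cited for each protocol's single queue.
for name, depth in [("SATA/AHCI, 1 queue x 32", 32), ("SAS, 1 queue x 256", 256)]:
    print(f"{name}: at most {max_iops(depth, LATENCY_S):,.0f} IOPS")
```

The bound is per device and ignores controller and network limits, but it shows why a shallow single queue caps parallel random reads no matter how fast the media is.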

Throughout each training epoch, and especially when the dataset is reshuffled between epochs, the compute cluster must fetch random batches of data rapidly. If the NAS system cannot deliver enough input/output operations per second (IOPS), the GPUs enter a wait state. This idle time directly increases the financial cost of compute resources and delays the deployment of machine learning models to production.
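A rough way to size this problem is to compare the read throughput the GPUs demand with what storage delivers. The sketch below assumes data loading is perfectly prefetched, so GPUs stall only when storage is slower than demand. All figures (8 GPUs, 3,000 samples per second each, 150 KB per sample, 1.2 GB/s delivered) are hypothetical.

```python
def required_read_throughput(samples_per_s: float, bytes_per_sample: float) -> float:
    """Bytes per second storage must deliver to keep the data loader ahead of the GPUs."""
    return samples_per_s * bytes_per_sample


def gpu_idle_fraction(required_bps: float, delivered_bps: float) -> float:
    """Fraction of wall-clock time GPUs wait on data under the perfect-prefetch assumption."""
    if required_bps <= 0 or delivered_bps <= 0:
        raise ValueError("throughput values must be positive")
    return max(0.0, 1.0 - delivered_bps / required_bps)


# Hypothetical cluster: 8 GPUs, each consuming 3,000 samples/s of 150 KB each.
required = required_read_throughput(8 * 3000, 150e3)
idle = gpu_idle_fraction(required, delivered_bps=1.2e9)
print(f"required: {required / 1e9:.1f} GB/s, GPU idle fraction: {idle:.0%}")
```

Multiplying the idle fraction by the hourly cost of the cluster gives a first estimate of what the storage bottleneck costs.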

How NVMe Transforms NAS Storage

NVMe was engineered specifically for solid-state media, bypassing the legacy controller bottlenecks of older protocols. Instead of relying on a single command queue, NVMe supports up to 64K (65,535) I/O queues, each up to 64K commands deep. This parallelism maps well onto modern multi-core processors, because each core can submit I/O through its own queue pair without contending for a shared lock.

When integrated into NAS arrays, NVMe drives provide direct, high-speed pathways between the storage media and system memory via the PCIe bus. At the device level, this reduces data access latency from the milliseconds typical of spinning disks into the microsecond range. For real-time data analytics, where insights must be extracted from streaming data the moment it arrives, this reduction in latency is critical. It allows databases to execute complex queries against live datasets without stalling.
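Latency claims are easy to check on your own hardware. The following is a minimal sketch of a random-read micro-benchmark using `os.pread` (available on Unix-like systems). The helper name `random_read_latencies` and the 4 MiB scratch file are illustrative assumptions. Because the scratch file sits in the page cache, the demo mostly measures memory speed; point the function at a large file on the storage under test to get meaningful numbers.

```python
import os
import random
import statistics
import tempfile
import time

BLOCK = 4096  # 4 KiB reads, a common random-I/O unit


def random_read_latencies(path: str, reads: int = 1000, seed: int = 0) -> list[float]:
    """Time `reads` random block-aligned reads from `path`; returns microseconds per read."""
    rng = random.Random(seed)
    blocks = os.path.getsize(path) // BLOCK
    if blocks == 0:
        raise ValueError("file is smaller than one block")
    latencies = []
    fd = os.open(path, os.O_RDONLY)
    try:
        for _ in range(reads):
            offset = rng.randrange(blocks) * BLOCK
            start = time.perf_counter()
            os.pread(fd, BLOCK, offset)
            latencies.append((time.perf_counter() - start) * 1e6)
    finally:
        os.close(fd)
    return latencies


# Demo against a small scratch file (served from page cache, so not a device measurement).
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(os.urandom(BLOCK * 1024))
    demo_path = tmp.name
try:
    lat = sorted(random_read_latencies(demo_path))
    print(f"median {statistics.median(lat):.1f} us, p99 {lat[int(len(lat) * 0.99)]:.1f} us")
finally:
    os.remove(demo_path)
```

For production-grade measurements, a dedicated I/O benchmarking tool that bypasses the page cache is a better choice than this sketch.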

Implementing iSCSI NAS for Real-Time Analytics

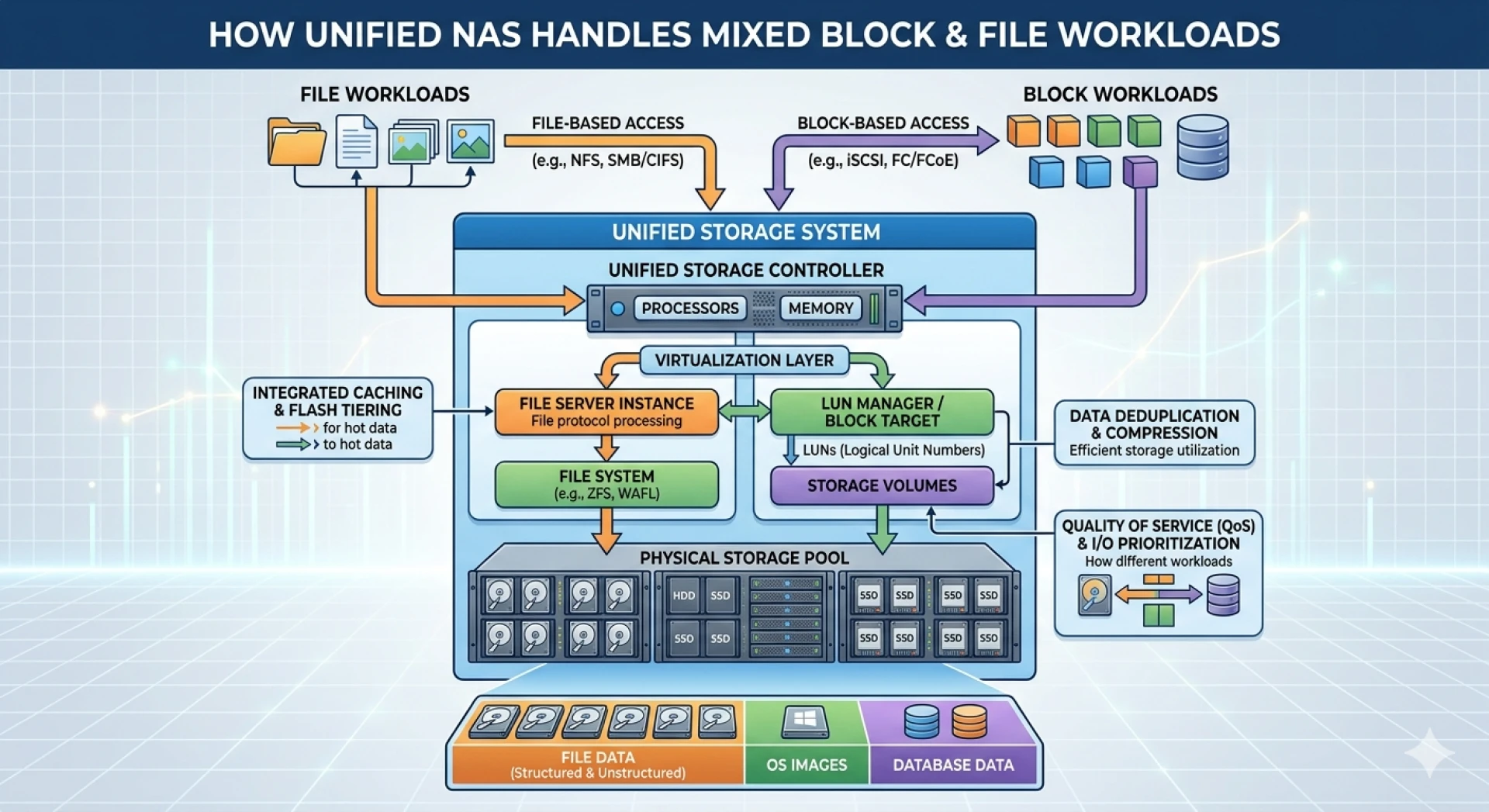

While file-level protocols like NFS and SMB are standard in traditional NAS environments, real-time analytics databases often require block-level access to achieve maximum performance. Deploying an iSCSI NAS configuration bridges this gap. iSCSI (Internet Small Computer Systems Interface) operates over standard Ethernet networks to transmit block-level storage data.

By utilizing an NVMe-powered iSCSI NAS, database servers view the network storage as a locally attached drive. This configuration bypasses the overhead associated with file system interpretation on the storage controller. The database engine can manage its own file structures and caching mechanisms directly on the high-performance NVMe array. For transactional databases and online analytical processing (OLAP) systems, this architecture delivers the consistent, high-throughput data stream needed to generate near-instantaneous insights.

Architecting Modern Network Storage Solutions

Designing an NVMe-powered storage environment requires careful consideration of the entire network fabric. Upgrading the storage media alone will simply shift the bottleneck from the disk to the network switch.

Network Infrastructure Requirements

To fully realize the bandwidth capabilities of NVMe, the surrounding network must support massive data throughput. Organizations should deploy 100GbE or 400GbE networking infrastructure. Furthermore, implementing Remote Direct Memory Access (RDMA) over Converged Ethernet (RoCE) allows data to move directly from the storage system's memory to the compute node's memory. This bypasses the kernel network stack and avoids CPU-driven data copies on both ends, significantly reducing network latency and CPU utilization.
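A quick back-of-the-envelope calculation shows how few NVMe drives it takes to fill a network link. This sketch assumes a drive that sustains about 7 GB/s of sequential reads and a 90% usable-bandwidth factor for protocol overhead. Both numbers are illustrative assumptions, and the function names are hypothetical.

```python
import math


def link_bytes_per_s(gbit_per_s: float, efficiency: float = 0.9) -> float:
    """Usable payload bandwidth of a link in bytes/s (efficiency is an assumed overhead factor)."""
    return gbit_per_s * 1e9 / 8 * efficiency


def drives_to_saturate(gbit_per_s: float, drive_bytes_per_s: float, efficiency: float = 0.9) -> int:
    """Smallest number of drives whose combined read rate meets or exceeds the link."""
    return math.ceil(link_bytes_per_s(gbit_per_s, efficiency) / drive_bytes_per_s)


DRIVE_BPS = 7e9  # assumed sequential read rate of a single NVMe drive

for speed in (10, 100, 400):
    n = drives_to_saturate(speed, DRIVE_BPS)
    print(f"{speed}GbE: {link_bytes_per_s(speed) / 1e9:.2f} GB/s usable, saturated by {n} drive(s)")
```

Under these assumptions, two drives are enough to fill a 100GbE link, which is why the fabric has to be sized together with the media.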

Scalability and High Availability

AI datasets grow quickly, and capacity planning has to assume they will keep growing. Modern network storage solutions must support seamless horizontal scaling. Scale-out NAS architectures allow administrators to add parallel storage nodes to the cluster, increasing both capacity and aggregate throughput in a near-linear fashion. High availability features, including synchronous replication and automated failover, ensure that hardware faults do not interrupt critical, long-running AI training jobs.

Optimizing Your Data Architecture for the Future

High-throughput AI training and real-time analytics dictate a strict requirement for low-latency, highly parallel storage. Legacy protocols constrain the potential of modern compute clusters, resulting in wasted resources and delayed insights. By transitioning to NVMe-powered storage environments, IT architects can deliver the performance necessary to support advanced machine learning workflows.

Begin by assessing the current I/O wait times on your GPU clusters to quantify your existing storage bottleneck. Next, evaluate your network fabric to ensure it supports the high-bandwidth requirements of RDMA and advanced block-storage protocols. Upgrading to a modern NVMe architecture will position your infrastructure to handle the data-intensive applications of tomorrow.
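As a starting point for that assessment, the sketch below computes the share of CPU time spent in iowait between two snapshots of Linux's `/proc/stat`. The snapshots here are synthetic so the example runs anywhere, and the helper names are hypothetical. CPU iowait is only a proxy for GPU starvation, so treat it as a first signal and confirm it against GPU utilization metrics.

```python
def parse_cpu_line(stat_text: str) -> list[int]:
    """Return the counters from the aggregate 'cpu' line of /proc/stat."""
    for line in stat_text.splitlines():
        fields = line.split()
        if fields and fields[0] == "cpu":
            return [int(v) for v in fields[1:]]
    raise ValueError("no aggregate 'cpu' line found")


def iowait_percent(before: str, after: str) -> float:
    """Percentage of CPU time spent in iowait between two /proc/stat snapshots."""
    a, b = parse_cpu_line(before), parse_cpu_line(after)
    deltas = [y - x for x, y in zip(a, b)]
    # Fields: user, nice, system, idle, iowait, irq, softirq, steal.
    # Guest counters are skipped because they are already included in user/nice.
    total = sum(deltas[:8])
    if total <= 0:
        raise ValueError("snapshots are identical or out of order")
    return 100.0 * deltas[4] / total


# Synthetic snapshots for illustration; on a Linux host, read /proc/stat twice a few seconds apart.
before = "cpu  1000 0 500 8000 200 0 50 0 0 0"
after = "cpu  1400 0 700 8900 900 0 100 0 0 0"
print(f"iowait: {iowait_percent(before, after):.1f}%")
```

A sustained high iowait on data-loader hosts during training is a reasonable cue to look more closely at the storage path.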
